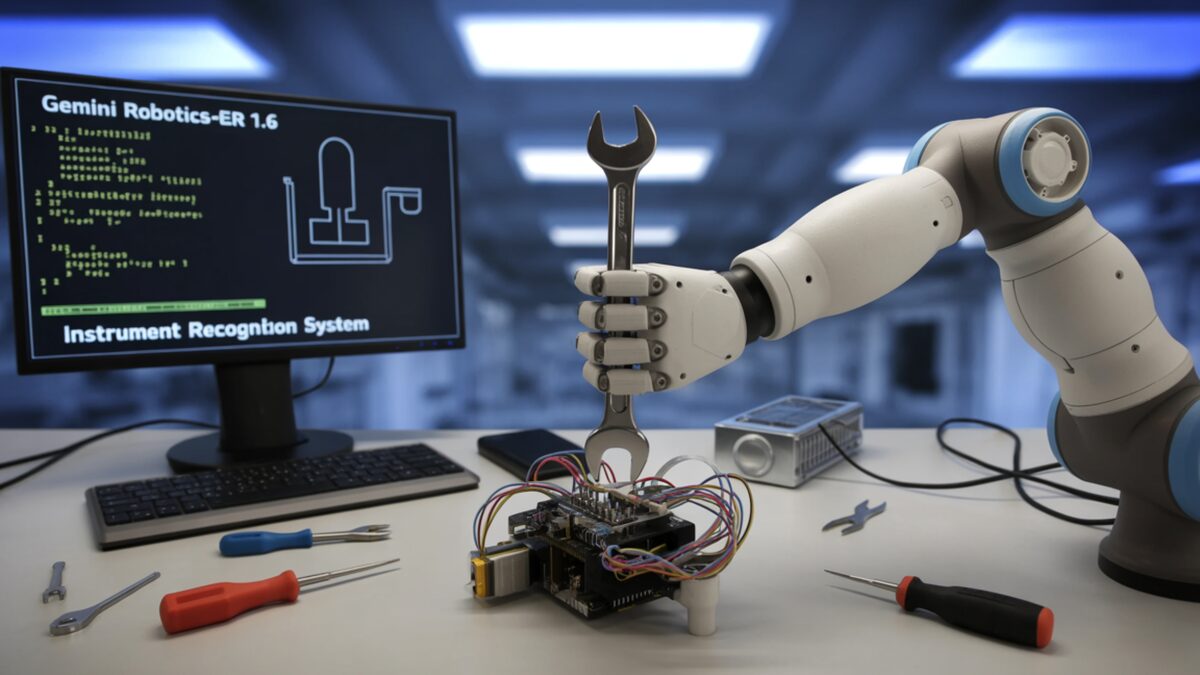

Gemini Robotics-ER 1.6 is the brain, not the hands. Here's how the new two-model robotics stack works.

Google DeepMind's Gemini Robotics-ER 1.6 lifts instrument-reading accuracy from 23% to 93% (with agentic vision) and slots in above a separate vision-language-action (VLA) model. Boston Dynamics' Spot is already running it.

Google DeepMind released Gemini Robotics-ER 1.6 on April 14, an update to its “embodied reasoning” line of models for robotics. The headline benchmark is industrial instrument reading (gauges, pressure readouts, sight glasses): accuracy rises from 23% for the previous version, ER 1.5, to 86% for ER 1.6 on its own and 93% with agentic vision. Boston Dynamics’ Spot is already using it for facility inspection rounds.

Why you’re hearing about robotics from an AI lab

Embodied AI is where a lot of the 2026 frontier-model money is going, and DeepMind, OpenAI, and the VLA startups (Physical Intelligence, Skild, Figure) are converging on the same architecture: a big reasoning model on top that decides what to do, and a smaller specialized model underneath that actually moves the joints. ER 1.6 is DeepMind’s bid for the top half of that stack. It’s also, not coincidentally, the first Gemini variant with real industrial customers (through Boston Dynamics’ Spot product) rather than demo-lab-only work.

What “embodied reasoning” actually means

An ER model is a vision-language model specialized for the questions a robot needs to answer before it acts. Where exactly is that object, down to the pixel? What is in front of or behind what? Has the task succeeded yet? Can this action be done safely given what’s visible? DeepMind’s blog post groups the capabilities into three buckets:

- Pointing and counting: precise object localization, trajectory mapping, and “is there a second one” style questions.

- Success detection: look at a scene across multiple camera streams and say whether the task is finished, partially done, or failed.

- Instrument reading: the new capability. Read an analog gauge, a digital readout, or a sight glass off camera.
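To make the pointing bucket concrete: DeepMind’s published examples for ER 1.5 return points as JSON, with `[y, x]` coordinates normalized to a 0 to 1000 range. The sketch below assumes ER 1.6 keeps that shape (worth confirming against the current API docs) and converts the points into pixel coordinates for a given camera frame. `PixelPoint` and `to_pixels` are hypothetical names for illustration.

```python
from __future__ import annotations

import json
from dataclasses import dataclass


@dataclass(frozen=True)
class PixelPoint:
    """A labeled point in image pixel coordinates (hypothetical helper type)."""
    label: str
    x: int
    y: int


def to_pixels(response_text: str, width: int, height: int) -> list[PixelPoint]:
    """Convert ER-style pointing output into pixel coordinates.

    Assumes the model returned a JSON list like
    [{"point": [y, x], "label": "..."}] with y and x normalized to 0..1000.
    """
    points = []
    for item in json.loads(response_text):
        y_norm, x_norm = item["point"]  # assumed order: y first, then x
        points.append(
            PixelPoint(
                label=item["label"],
                x=round(x_norm / 1000 * width),
                y=round(y_norm / 1000 * height),
            )
        )
    return points


# One point halfway down and a quarter of the way across a 640x480 frame.
raw = '[{"point": [500, 250], "label": "red mug"}]'
print(to_pixels(raw, width=640, height=480))
# [PixelPoint(label='red mug', x=160, y=240)]
```

Models sometimes wrap JSON answers in a Markdown code fence; strip that before calling `json.loads` if it shows up.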

ER 1.6 doesn’t emit motor commands. It emits plans and answers. The motor commands come from a separate model lower in the stack.

The two-model architecture matters more than the benchmark

Here’s the bit that’s going to define the next year of robotics API design. Google’s hierarchy, as MarkTechPost laid out, puts ER 1.6 on top as the “strategist” and Gemini Robotics 1.5 (a VLA, or vision-language-action model) underneath as the executor. ER 1.6 natively calls tools: Google Search, the VLA itself, and any user-defined functions the developer wires up. The VLA receives a visual input and a prompt like “pick up the red mug on the counter” and produces the actual joint-angle sequence.
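In code, the strategist/executor split comes down to a dispatch loop: the reasoning model emits tool calls, and your code routes each one to the VLA, a search tool, or a user-defined function. This is a minimal illustration, not the Gemini API’s function-calling interface; the `{"name": ..., "args": ...}` dict shape, the tool names, and the stub backends are all hypothetical stand-ins.

```python
from typing import Any, Callable, Dict, List

ToolFn = Callable[..., Any]


def make_registry(vla: ToolFn, search: ToolFn, **user_tools: ToolFn) -> Dict[str, ToolFn]:
    """Map tool names the planner may emit to the functions that handle them."""
    registry: Dict[str, ToolFn] = {"vla_execute": vla, "search": search}
    registry.update(user_tools)  # developer-defined functions, e.g. a gauge logger
    return registry


def dispatch(tool_calls: List[Dict[str, Any]], registry: Dict[str, ToolFn]) -> List[Any]:
    """Run each planner tool call in order and collect the results."""
    results = []
    for call in tool_calls:
        fn = registry.get(call["name"])
        if fn is None:
            # Refuse rather than guess when the planner names a tool we never wired up.
            raise KeyError(f"planner requested unknown tool {call['name']!r}")
        results.append(fn(**call.get("args", {})))
    return results


# Stubs standing in for the real VLA and search backends (hypothetical).
def fake_vla(instruction: str) -> str:
    return f"executed: {instruction}"


def fake_search(query: str) -> str:
    return f"results for: {query}"


registry = make_registry(fake_vla, fake_search, log_reading=lambda gauge, value: (gauge, value))
plan = [
    {"name": "search", "args": {"query": "normal operating pressure for pump P-101"}},
    {"name": "vla_execute", "args": {"instruction": "pick up the red mug on the counter"}},
]
print(dispatch(plan, registry))
```

Because the VLA sits behind an ordinary function boundary, swapping in a newer planner doesn’t touch the executor code at all.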

This split is important because VLAs are expensive to train, have to generalize across embodiments (arm, humanoid, dog, mobile manipulator), and benefit from being kept relatively stable. Reasoning models iterate faster. Splitting them means you can upgrade the brain (ER 1.6 today, ER 1.7 in six months) without re-certifying every robot’s motor stack.

Every serious robotics lab is now building some variant of this split. DeepMind’s version is the one with the biggest partner footprint today.

The 23 → 93 jump isn’t actually as clean as it sounds

The instrument-reading benchmark comparison needs a caveat. ER 1.5 scored 23%. Gemini 3.0 Flash, a general-purpose non-robotics model, scored 67% on the same task. ER 1.6 hits 86% solo and 93% with agentic vision (where the model can crop and zoom into the specific dial it’s trying to read). The takeaway isn’t that embodied reasoning models got 4x better in six months; it’s that the previous-generation ER model was worse at this specific task than a general-purpose Gemini, and the new one finally overtakes it.
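The arithmetic behind that caveat is easy to check. This sketch uses only the four scores quoted above and compares them two ways: as a ratio of accuracies (which produces the “4x” headline against ER 1.5) and as the fraction of a baseline’s errors eliminated (a framing chosen here for illustration, not one DeepMind uses).

```python
# Instrument-reading accuracies as reported in the release coverage.
SCORES = {
    "ER 1.5": 0.23,
    "Gemini 3.0 Flash": 0.67,
    "ER 1.6 solo": 0.86,
    "ER 1.6 agentic": 0.93,
}


def accuracy_ratio(new: float, old: float) -> float:
    """How many times higher the new accuracy is than the old one."""
    return new / old


def error_reduction(new: float, old: float) -> float:
    """Fraction of the baseline's errors that the new model eliminates."""
    return 1 - (1 - new) / (1 - old)


for name in ("ER 1.6 solo", "ER 1.6 agentic"):
    new = SCORES[name]
    print(
        f"{name}: {accuracy_ratio(new, SCORES['ER 1.5']):.2f}x ER 1.5 accuracy, "
        f"{error_reduction(new, SCORES['Gemini 3.0 Flash']):.0%} fewer errors than Gemini 3.0 Flash"
    )
```

Against ER 1.5 the ratios come out to about 3.74x (solo) and 4.04x (agentic). Against Gemini 3.0 Flash, ER 1.6 removes roughly 58% (solo) to 79% (agentic) of the remaining errors, which is the fairer comparison.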

That’s still a meaningful result. Boston Dynamics has been trying to make Spot’s facility-inspection pitch (read the gauge, flag the anomaly, move on) land for three years, and the reading accuracy was the blocker. Going from unusable to usable is the real story.
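Once the reading itself is reliable, the “read the gauge, flag the anomaly, move on” loop is simple. A minimal sketch, assuming the model’s answer has already been parsed into a numeric value and a unit; the `GaugeSpec` and `Reading` types, gauge IDs, and normal ranges are hypothetical and would come from the facility’s own specs.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class GaugeSpec:
    gauge_id: str
    unit: str
    low: float   # lowest normal value
    high: float  # highest normal value


@dataclass(frozen=True)
class Reading:
    gauge_id: str
    value: float
    unit: str


def flag_anomalies(readings: List[Reading], specs: List[GaugeSpec]) -> List[Tuple[str, str]]:
    """Return (gauge_id, reason) for every reading that needs a human to look at it."""
    by_id = {s.gauge_id: s for s in specs}
    flagged = []
    for r in readings:
        spec = by_id.get(r.gauge_id)
        if spec is None:
            flagged.append((r.gauge_id, "no spec for this gauge"))
        elif r.unit != spec.unit:
            flagged.append((r.gauge_id, f"unit mismatch: got {r.unit}, expected {spec.unit}"))
        elif not spec.low <= r.value <= spec.high:
            flagged.append((r.gauge_id, f"{r.value} {r.unit} outside [{spec.low}, {spec.high}]"))
    return flagged


specs = [GaugeSpec("P-101", "PSI", low=15.0, high=30.0)]
round_readings = [Reading("P-101", 22.0, "PSI"), Reading("P-102", 4.1, "bar")]
print(flag_anomalies(round_readings, specs))
```

Unknown gauges and unit mismatches are flagged rather than silently skipped, since a misread unit is exactly the kind of error an inspection round should surface.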

How it compares to what Anthropic and OpenAI are doing

Neither of the other two top-tier AI labs has shipped a robotics API yet, but both are visibly building. Anthropic’s work is still internal; the public signal is the Claude Opus 4.7 vision-model improvements and hiring in robotics roles. OpenAI’s Figure partnership is producing humanoid demos but not a developer-callable model. Physical Intelligence (pi-0, pi-0.5) is shipping a VLA but no equivalent reasoning layer on top.

The effect of DeepMind being first is that the reference design now exists. When Anthropic or OpenAI do ship, they’ll either build to the same two-model split or they’ll have to justify why not. That’s not a small thing; architectural coordination around a working product is how industry standards get locked in. The last time this happened in AI was the OpenAI chat-completions API shape, which became the de facto standard even though nothing about it was standards-body blessed.

What’s not in this release

Worth flagging the absences. There’s no new VLA; Gemini Robotics 1.5 is still the action model. There’s no pricing shift; ER 1.6 runs at standard Gemini API rates, which suggests it is sized to be cheap enough to call in a tight loop for real-time robotics use. There’s no public third-party eval of the instrument-reading numbers yet. And there’s no commitment on model-weight release; ER 1.6 is API-only, not open-weight, same as every other Gemini variant.
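If the planner is cheap enough to call often, one common way to structure the loop is multi-rate: the executor ticks at a high, fixed rate while the planner is consulted every N ticks, and its latest plan is reused in between. This is a simulated-time sketch; the 50 Hz and 2 Hz rates and the `plan_fn`/`act_fn` callbacks are hypothetical, and a real loop would also have to handle planner latency.

```python
from typing import Any, Callable, List


def run_multirate(
    total_ticks: int,
    executor_hz: int,
    planner_hz: int,
    plan_fn: Callable[[int], Any],
    act_fn: Callable[[int, Any], None],
) -> int:
    """Simulate a fast executor loop that re-plans at a slower, fixed cadence.

    Returns the number of planner calls, which is what drives API cost.
    """
    if planner_hz <= 0 or executor_hz % planner_hz != 0:
        raise ValueError("planner_hz must be a positive divisor of executor_hz")
    ticks_per_plan = executor_hz // planner_hz
    current_plan = None
    planner_calls = 0
    for tick in range(total_ticks):
        if tick % ticks_per_plan == 0:
            current_plan = plan_fn(tick)  # e.g. one reasoning-model request
            planner_calls += 1
        act_fn(tick, current_plan)  # e.g. one executor step using the latest plan
    return planner_calls


# Two simulated seconds at 50 Hz execution and 2 Hz planning.
log: List[Any] = []
calls = run_multirate(
    total_ticks=100,
    executor_hz=50,
    planner_hz=2,
    plan_fn=lambda tick: f"plan@{tick}",
    act_fn=lambda tick, plan: log.append((tick, plan)),
)
print(calls, log[0], log[-1])
# 4 (0, 'plan@0') (99, 'plan@75')
```

Planner cost scales with `planner_hz`, not the executor rate: at 2 Hz that is 7,200 planner calls per robot-hour, which is why per-call price is what the “tight loop” point hinges on.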

For developers, that last one matters. If you want on-device robotics inference (latency-sensitive, offline, or regulated), you still have to go to an open-weight VLA like Physical Intelligence’s pi-0.5 or Nvidia’s GR00T and build your own planner. ER 1.6 is a cloud-API reasoning model, full stop.
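Because ER 1.6 is cloud-only, one way to combine it with an on-device planner is to treat the cloud model as the preferred path and fall back to the local one when a call misses its deadline or fails. A standard-library sketch of that pattern; `cloud_plan` and `local_plan` are hypothetical callables, and nothing here is a DeepMind-provided mechanism.

```python
import concurrent.futures as cf
import time
from typing import Any, Callable, Tuple

# Shared worker pool so a slow cloud call cannot block the caller past its deadline.
_POOL = cf.ThreadPoolExecutor(max_workers=4)


def plan_with_fallback(
    cloud_plan: Callable[[Any], Any],
    local_plan: Callable[[Any], Any],
    observation: Any,
    timeout_s: float,
) -> Tuple[str, Any]:
    """Try the cloud planner within a deadline; otherwise use the local planner.

    Returns (source, plan) so callers can log which path produced the plan.
    """
    future = _POOL.submit(cloud_plan, observation)
    try:
        return "cloud", future.result(timeout=timeout_s)
    except (cf.TimeoutError, OSError):
        # OSError covers ConnectionError; widen this to whatever your client library raises.
        # A timed-out call keeps running in its worker thread (Python threads cannot be
        # killed), so its late result is simply ignored.
        return "local", local_plan(observation)


def slow_cloud(obs: str) -> str:
    time.sleep(0.2)  # simulates a network round trip that blows the deadline
    return f"cloud plan for {obs}"


print(plan_with_fallback(slow_cloud, lambda obs: f"local plan for {obs}", "frame-1", timeout_s=0.05))
```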

Safety is in the release notes

DeepMind calls ER 1.6 its safest robotics model so far, citing gains of +6% (text) and +10% (video) over Gemini 3.0 Flash on physical-hazard identification. How much “safest model the lab has shipped” matters depends entirely on the deployment context; for a Spot robot in an oil refinery, identifying hazards correctly matters far more than something like an API rate limit. The published numbers are internal DeepMind evals, not third-party, so the usual caveats apply.
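One place hazard identification can sit in the two-model stack is as a gate between planner and executor: every proposed action is checked before it reaches the VLA, and anything not explicitly cleared is blocked. A minimal sketch; `Verdict`, `hazard_check`, and `execute` are hypothetical, and in practice the check might itself be an ER query along the lines of “can this action be done safely given what’s visible?”

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Verdict:
    safe: bool
    reason: str


def gated_execute(
    action: str,
    hazard_check: Callable[[str], Verdict],
    execute: Callable[[str], str],
) -> str:
    """Run execute(action) only if the hazard check explicitly clears it."""
    try:
        verdict = hazard_check(action)
    except Exception as exc:  # fail closed: an errored check never counts as "safe"
        return f"blocked: hazard check failed ({exc})"
    if not verdict.safe:
        return f"blocked: {verdict.reason}"
    return execute(action)


# Hypothetical checks: one that sees a hazard, one that clears the action.
def sees_steam(action: str) -> Verdict:
    return Verdict(False, "steam leak visible near valve")


def all_clear(action: str) -> Verdict:
    return Verdict(True, "no hazards detected")


def run(action: str) -> str:
    return f"done: {action}"


print(gated_execute("open valve V-3", sees_steam, run))
print(gated_execute("open valve V-3", all_clear, run))
```

Failing closed is the key design choice: a timeout or error in the safety check blocks the action instead of letting it through.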

Why you’re hearing about this now

Two reasons. First, Anthropic and OpenAI both have robotics teams building toward the same two-model architecture, and neither has shipped an API yet. DeepMind being first out with a pricing-listed, Gemini-API-accessible embodied reasoning model sets the reference design. Second, Boston Dynamics’ facility-inspection market is finally ready to become a real product; there’s demand for gauge-reading robot dogs from energy, utilities, and heavy industry that has been blocked on this exact capability.

What this means for you

If you’re building on any robot platform, start here: pull up the ER 1.6 Colab, feed it a photo of your target scene, and benchmark the pointing and success-detection outputs against whatever you were doing before. You don’t need a robot to evaluate the model; it’s a vision-language API. If it looks meaningfully better than your current planner, wire it in above your existing action stack as the planner, with that stack exposed to it as a callable tool.
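For the pointing benchmark, a simple metric is hit rate: the fraction of labeled objects for which a system’s predicted point lands inside the ground-truth bounding box. This sketch assumes you have hand-labeled boxes for your own images and predictions already converted to pixel coordinates; the metric and data layout are illustrative choices, not DeepMind’s evaluation protocol.

```python
from typing import Dict, Tuple

Point = Tuple[float, float]              # (x, y) in pixels
Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max) in pixels


def point_in_box(point: Point, box: Box) -> bool:
    x, y = point
    x_min, y_min, x_max, y_max = box
    return x_min <= x <= x_max and y_min <= y <= y_max


def hit_rate(predictions: Dict[str, Point], ground_truth: Dict[str, Box]) -> float:
    """Fraction of ground-truth objects whose predicted point falls inside their box.

    A label missing from `predictions` counts as a miss.
    """
    if not ground_truth:
        raise ValueError("ground_truth must contain at least one labeled object")
    hits = sum(
        1
        for label, box in ground_truth.items()
        if label in predictions and point_in_box(predictions[label], box)
    )
    return hits / len(ground_truth)


# Compare two systems on the same hand-labeled image (hypothetical numbers).
truth = {"mug": (100, 100, 200, 200), "plate": (300, 300, 400, 400)}
new_model = {"mug": (150, 150), "plate": (350, 320)}
old_planner = {"mug": (150, 150), "plate": (10, 10)}
print(hit_rate(new_model, truth), hit_rate(old_planner, truth))
# 1.0 0.5
```

Averaging this over the same image set for both systems gives a like-for-like comparison.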

If you’re evaluating the robotics vendor landscape, Boston Dynamics’ Spot just got a real software upgrade, not just a marketing one; that changes the TCO math on facility-inspection deployments. Energy and utilities buyers who were in pilot mode should now look at moving to contract, because the gauge-reading blocker is gone.

And if you’re running a robotics lab, this release is the clearest public signal yet that the two-model (reasoner + VLA) split is becoming the consensus architecture. Your own stack should probably match it. If you’re planning to compete head-on with Gemini Robotics, the bar is now: do better than 93% on instrument reading, beat Spot’s inspection uptime, or undercut the Gemini API price by a lot. Anything short of that and you’re shipping into a DeepMind-shaped niche.

Sources

- Gemini Robotics ER 1.6: Enhanced Embodied Reasoning — Google DeepMind

- Google DeepMind Releases Gemini Robotics-ER 1.6: Bringing Enhanced Embodied Reasoning and Instrument Reading to Physical AI — MarkTechPost

- DeepMind's Gemini Robotics-ER 1.6 Lets Spot Read Gauges — WinBuzzer

- Google DeepMind releases Gemini Robotics-ER 1.6 — TestingCatalog

Frequently Asked Questions

What is 'embodied reasoning' and how is it different from a regular Gemini model?

Embodied reasoning is Google's term for a vision-language model specialized to answer the questions a robot asks before moving: where is the object, what's occluded, is this action done, is this gauge reading 22 PSI? ER 1.6 is a reasoning model; it doesn't emit motor commands itself.

Is ER 1.6 the same as a VLA (vision-language-action) model?

No. It sits above a VLA. Google's architecture puts ER 1.6 on top as the strategist, picking what to do next, and Gemini Robotics 1.5 (the VLA) underneath, translating that into actual joint commands. The brain plans; the VLA moves.

What's the 93% instrument-reading number actually measuring?

Google's internal benchmark for reading real industrial gauges, sight glasses, and pressure readouts off camera. ER 1.5 hit 23% on the same suite; ER 1.6 hits 86% solo and 93% with agentic vision (meaning it can crop and zoom into the dial itself). Boston Dynamics' Spot uses this capability for facility inspection rounds.

Can I actually use this model?

Yes. ER 1.6 is live in the Gemini API and Google AI Studio as of April 14. A Colab notebook with examples is linked from the DeepMind blog post. You don't need a robot to call it; it works on static images for spatial reasoning tasks.

Does this work with non-Google robots?

In principle, yes, because ER 1.6 is an API model, not firmware. Google demonstrated it on Boston Dynamics' Spot and showed partner integrations, but there's nothing Google-hardware-specific about calling the API from your own robot stack.