Hyperscalers are on track to spend $700B on AI infrastructure in 2026

Big-tech AI capex is projected at $700B in 2026, up from $410B in 2025. Microsoft alone guided $190B. Wall Street is split: Meta got punished for the spend, Alphabet rallied.

Microsoft alone is guiding $190 billion of AI capex for 2026. Combined hyperscaler spend is on track for $700 billion, Fortune reported on April 30, up from roughly $410 billion last year. Meta’s stock fell on the plan; Alphabet and Amazon rallied on cloud growth. No one offered a public answer for when the buildout ends.

The numbers are big enough to feel abstract, so the way to read them is in two layers. Layer one is what’s happening to the AI runtime: cloud capacity is being added at a rate that pulls forward the next four years of GPU, DRAM, and substation demand into a single year. Layer two is what’s happening to the companies funding it: the spending is so large that it has visibly bifurcated investor reactions. The same earnings season that pushed Alphabet’s stock up because of $20 billion in Cloud revenue pushed Meta’s stock down because the underlying infrastructure bill exceeded what analysts were already pricing in.

The math under the headline

The $700 billion figure is Fortune’s tally of disclosed and projected AI infrastructure capex across the four largest hyperscalers (Microsoft, Alphabet, Amazon, and Meta), with smaller contributions from Oracle and others. Combined Q1 capex across the four cleared $130 billion, most of it spent on data-center buildouts.
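As a rough sanity check on those totals, the quoted figures can be run through some quick arithmetic. This is only a sketch: the variable names are hypothetical, and the Q1 figure is treated as exactly $130 billion even though the text says only that it "cleared" that level.

```python
# Back-of-the-envelope check using only figures quoted in the article.
# All values are in billions of US dollars.
CAPEX_2025 = 410.0  # "roughly $410 billion last year"
CAPEX_2026 = 700.0  # Fortune's projected 2026 tally
Q1_2026 = 130.0     # assumption: treated as exactly 130, though the text says "cleared"


def pct_change(old: float, new: float) -> float:
    """Percentage change from old to new."""
    return (new - old) / old * 100.0


yoy_growth = pct_change(CAPEX_2025, CAPEX_2026)

# Average quarterly spend needed in Q2 through Q4 to reach the full-year projection.
avg_remaining_quarter = (CAPEX_2026 - Q1_2026) / 3

print(f"Year-over-year growth: {yoy_growth:.1f}%")                 # 70.7%
print(f"Needed per remaining quarter: ${avg_remaining_quarter:.0f}B")  # $190B
```

Under these assumptions, spending would have to rise from about $130 billion in Q1 to an average of about $190 billion per quarter for the rest of the year. Because the $700 billion tally also includes Oracle and others while the Q1 figure covers only the four largest companies, the per-quarter number is best read as an upper bound on what the four alone would need.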

Microsoft is the cleanest data point. The company reported FY26 Q3 results on April 29: $82.89 billion in revenue, Microsoft Cloud above $54 billion, and an AI business at a $37 billion annualized run rate, up 123%. Q4 capex is expected to clear $40 billion, with calendar-2026 guidance of $190 billion, up 61% from 2025. Roughly $25 billion of that increase is component-cost inflation; the rest is silicon and steel.
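The Microsoft guidance can be unpacked the same way. The sketch below assumes that "up 61%" is exact and that the $25 billion of component-cost inflation applies to the full calendar-year increase; both are readings of the text rather than disclosed breakdowns, and the variable names are hypothetical.

```python
# Microsoft figures quoted in the article, in billions of US dollars.
GUIDANCE_2026 = 190.0     # calendar-2026 capex guidance
GROWTH_VS_2025 = 0.61     # "up 61% from 2025" (assumed exact)
INFLATION_PORTION = 25.0  # "roughly $25 billion of that increase"

# Back out the 2025 base implied by the growth rate.
base_2025 = GUIDANCE_2026 / (1 + GROWTH_VS_2025)

# Split the year-over-year increase into inflation and everything else.
increase = GUIDANCE_2026 - base_2025
non_inflation = increase - INFLATION_PORTION
inflation_share = INFLATION_PORTION / increase

print(f"Implied 2025 capex:    ${base_2025:.0f}B")      # about $118B
print(f"Year-over-year rise:   ${increase:.0f}B")       # about $72B
print(f"Rise ex-inflation:     ${non_inflation:.0f}B")  # about $47B
print(f"Inflation share of rise: {inflation_share:.0%}")  # about 35%
```

On this reading, roughly a third of Microsoft's capex increase buys no additional capacity; it only pays more for the same components.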

Alphabet’s Q1 added the demand-side picture. Google Cloud booked $20.03 billion in revenue (up 63%), and Pichai disclosed a contracted-but-unrecognized backlog of more than $460 billion. That backlog is the line item that justifies the spend: customers have committed to buy enough cloud capacity over the next several years to make the buildout look pre-sold.

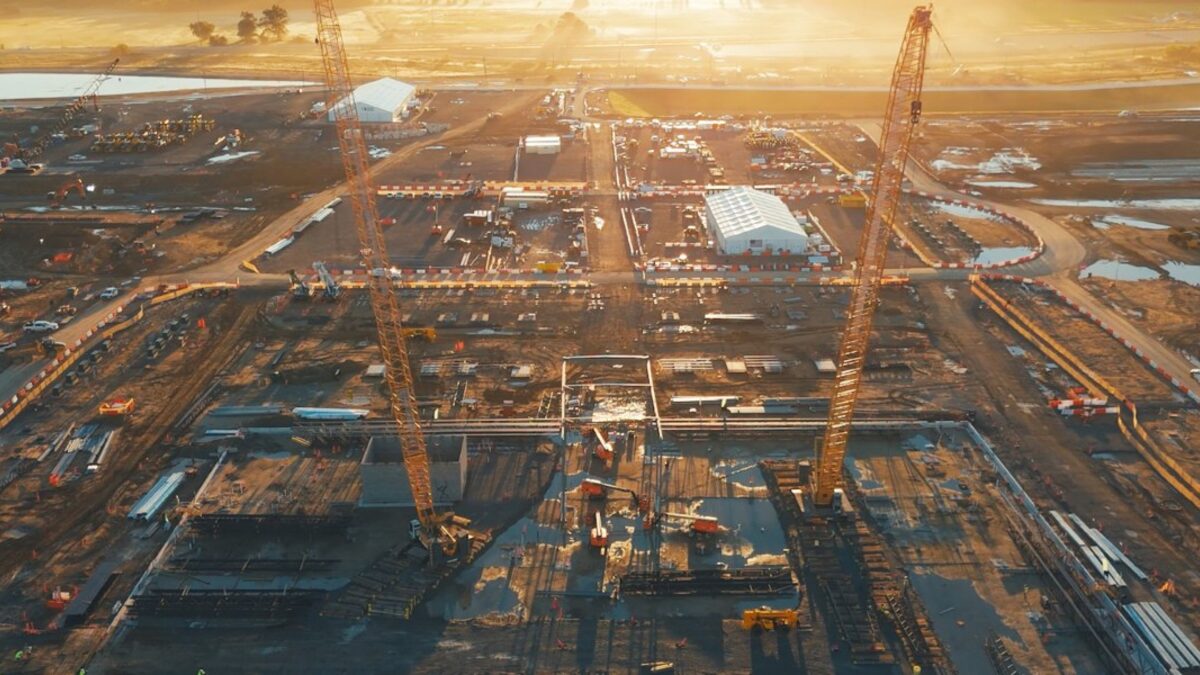

Meta’s contribution is the one Wall Street is reading hardest. Fortune cited Meta’s $27 billion Hyperion data center project in northeast Louisiana as an emblematic example, with some estimates suggesting the campus will run “millions of GPUs” once it ramps. The market response was negative: investors pushed Meta’s shares down on the magnitude of the program, signaling that the equity market is now willing to penalize unbounded AI capex even when revenue is growing.

Why the buildout has no public end date

McKinsey research cited by Fortune projects that keeping pace with computing demand will require “$6.7 trillion worldwide” in AI capex by 2030. The four hyperscalers are shifting from “we are funding the buildout from cash flow” to “we are funding the buildout from cash flow and equity, and the equity holders have noticed.”

What’s not in the public commentary, even after this earnings cycle, is a payback model. None of the CEOs on the four calls named a year in which AI capex normalizes. In Microsoft’s prepared remarks, Satya Nadella framed the spending as expansion of the total addressable market (TAM), saying that “the most exciting things are plug-ins in Word or Excel, or CLIs in coding.” That is a story about new revenue streams, not about saturating the existing ones. Pichai’s TPU disclosure on the Alphabet call tells a similar story: selling silicon to outside customers is what you do when internal demand is outpacing your ability to supply the inside.

What this means for you

If you’re an engineer, the practical effect is on your supply chain. Memory chip pricing is the most visible second-order consequence: AI demand is the reason Apple is warning about RAMageddon, the reason Samsung’s Q1 was a record, and the reason your laptop refresh quote went up. Plan device upgrades around it.

If you’re a product or platform owner shipping on hyperscaler infrastructure, the buildout protects you from capacity shortages but raises the floor on inference cost. Lock in committed-use discounts where you can. Negotiate now while the hyperscalers are competing on enterprise share, not later when the backlog tightens.

And if you’re tracking the macro story, the chart to keep on your desk is the gap between hyperscaler capex and free cash flow. Microsoft is spending the equivalent of two years of net income on AI in 2026. Alphabet is in the same shape. The day a CFO names a year for that ratio to come back down is the day the market reprices the whole sector. It hasn’t happened yet.
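For readers who want to track that ratio themselves, a minimal sketch follows. The function name is hypothetical, and the net-income value is not a reported figure: it is simply what the "two years of net income" comparison implies when set against the $190 billion guidance.

```python
def years_of_income(capex: float, annual_net_income: float) -> float:
    """Return how many years of net income a given capex figure represents."""
    if annual_net_income <= 0:
        raise ValueError("annual_net_income must be positive")
    return capex / annual_net_income


# Figures in billions of US dollars.
MSFT_CAPEX_2026 = 190.0

# Assumption: backed out from the article's "two years of net income"
# comparison, not taken from a financial statement.
implied_net_income = MSFT_CAPEX_2026 / 2.0

ratio = years_of_income(MSFT_CAPEX_2026, implied_net_income)
print(f"Implied annual net income: ${implied_net_income:.0f}B")
print(f"Capex as years of net income: {ratio:.1f}")
```

Swapping in reported net income or free cash flow from each company's filings, quarter by quarter, would produce the chart described above.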
