An AI agent built a working RISC-V CPU from a 219-word prompt in 12 hours. Here's what it actually did.

Verkor's Design Conductor agent went from a 219-word spec to a tape-out-ready RISC-V core called VerCore in 12 hours. The catch: it's still a Celeron.

A startup called Verkor says its agent went from a 219-word requirements document to a layout-ready RISC-V CPU in 12 hours. The chip, called VerCore, met timing at 1.48 GHz on a simulated 7nm process and scored 3,261 on CoreMark, per IEEE Spectrum. That’s the rough performance class of an Intel Celeron from around 2011. It’s also the first time anyone has reported a fully autonomous run from spec to tape-out-ready GDSII.

If you’ve heard “AI is designing chips” before, you’ve heard it about RTL generation, layout placement, or PPA optimization, where AI helps a human engineer at one stage. What Verkor’s Design Conductor paper describes is different: one agent, one prompt, one button. Spec in, GDSII out.

What Verkor actually shipped

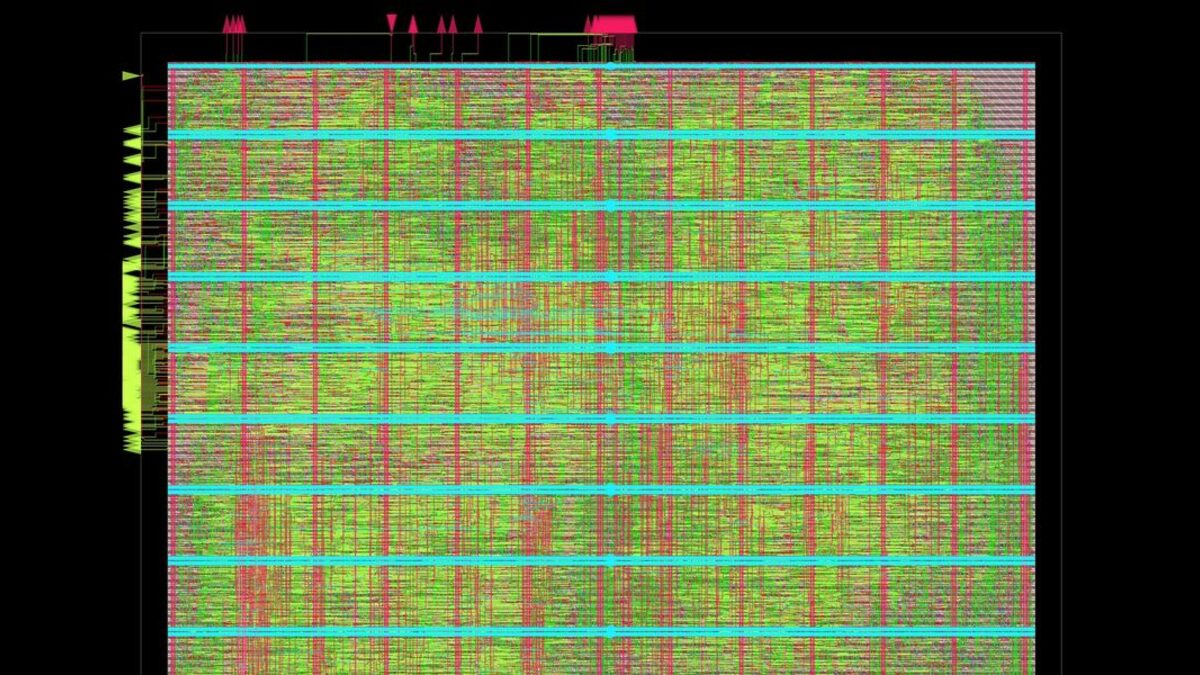

The team gave Design Conductor a 219-word spec for an rv32i-zmmul core. That’s the 32-bit RISC-V base integer ISA plus Zmmul, the multiply-only subset of the M extension (multiply instructions, no divide). No floating point, no atomics, no virtual memory. Twelve hours later, the agent produced VerCore: a five-stage, in-order, single-issue pipeline with caches and a complete physical implementation, from synthesis through layout, on the open ASAP7 PDK.
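
For readers unfamiliar with the ISA string, here is a minimal Python sketch of the four multiply instructions that Zmmul adds to RV32I. It only models the architectural results on 32-bit register values; the function names are illustrative and nothing here is taken from VerCore's RTL.

```python
MASK32 = 0xFFFFFFFF


def to_signed32(x: int) -> int:
    """Interpret the low 32 bits of x as a two's-complement signed value."""
    x &= MASK32
    return x - (1 << 32) if x & 0x80000000 else x


def mul(rs1: int, rs2: int) -> int:
    """MUL: low 32 bits of the product (identical for signed and unsigned)."""
    return (rs1 * rs2) & MASK32


def mulh(rs1: int, rs2: int) -> int:
    """MULH: high 32 bits of signed x signed."""
    return ((to_signed32(rs1) * to_signed32(rs2)) >> 32) & MASK32


def mulhsu(rs1: int, rs2: int) -> int:
    """MULHSU: high 32 bits of signed rs1 x unsigned rs2."""
    return ((to_signed32(rs1) * (rs2 & MASK32)) >> 32) & MASK32


def mulhu(rs1: int, rs2: int) -> int:
    """MULHU: high 32 bits of unsigned x unsigned."""
    return (((rs1 & MASK32) * (rs2 & MASK32)) >> 32) & MASK32
```

The divide and remainder instructions of the full M extension are deliberately absent, which is the whole point of Zmmul: a smaller core can offer hardware multiply without paying for a divider.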

The numbers, from the paper’s abstract on arXiv:

- 1.48 GHz clock at sign-off on ASAP7 7nm.

- CoreMark 3,261, which Spectrum compares to an Intel Celeron SU2300 from 2011.

- rv32i-zmmul ISA, verified against the Spike reference simulator.

- 12 hours of agent runtime, end to end.
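
As a rough sanity check, the two headline numbers can be combined into a clock period and a per-megahertz score. The inputs are the figures listed above; the derived values are plain arithmetic, not numbers reported by Verkor.

```python
CLOCK_HZ = 1.48e9  # sign-off clock, from the list above
COREMARK = 3261    # CoreMark score, from the list above


def clock_period_ps(clock_hz: float) -> float:
    """Time budget for one pipeline stage, in picoseconds."""
    return 1e12 / clock_hz


def coremark_per_mhz(score: float, clock_hz: float) -> float:
    """CoreMark normalized by clock frequency in MHz."""
    return score / (clock_hz / 1e6)


print(f"clock period: {clock_period_ps(CLOCK_HZ):.0f} ps")              # 676 ps
print(f"CoreMark/MHz: {coremark_per_mhz(COREMARK, CLOCK_HZ):.2f}")      # 2.20
```

In other words, every pipeline stage has to settle in roughly 676 ps, and the core retires about 2.2 CoreMark iterations per second for each MHz of clock.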

Co-founder Ravi Krishna told IEEE Spectrum that what the agent could pull off “improved significantly” over the months they iterated. The same paper notes Design Conductor explored multiple microarchitectural variants on the way to VerCore, not just one.

Two caveats matter. First, no silicon. ASAP7 is a research PDK, not a foundry tape-out. The chip exists as a synthesized layout file, not as a wafer. Second, no Linux yet. The 219-word prompt asked for a CPU, not a system, and rv32i-zmmul is too small to boot Linux. The paper’s title hints at the next step (“1.5 GHz Linux-capable”) but the demo is the integer core.

How “fully autonomous” really works

Plenty of agentic coding tools can wire up a backend in 12 hours. Hardware is harder for two reasons: the iteration loop is slower, and the failure modes are weirder. A corner case your simulation never exercised can ship as a bug in silicon, and you find out a year and $5M later.

Design Conductor wraps the conventional EDA stack (RTL generation, testbench creation, simulation, synthesis, timing closure, layout) and drives it the way a senior chip designer would. The agent writes Verilog, compiles it, simulates it under the testbench, checks the results against Spike, reads the failures, edits, and re-runs. When it misses timing, it tries different pipeline depths or register layouts and re-synthesizes. The paper credits the agent with completing every step except physical fabrication.
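
The check-against-the-reference step in that loop can be pictured as a trace diff. The sketch below is a hypothetical illustration, not Design Conductor's code: it assumes both the RTL simulation and the reference model emit one record per retired instruction, and the `Commit` type and `first_divergence` helper are names invented here.

```python
from dataclasses import dataclass
from typing import Iterable, Optional, Tuple


@dataclass(frozen=True)
class Commit:
    pc: int     # address of the retired instruction
    rd: int     # destination register index (0 means no architectural write)
    value: int  # value written to rd


Mismatch = Tuple[int, Optional[Commit], Optional[Commit]]


def first_divergence(dut: Iterable[Commit], ref: Iterable[Commit]) -> Optional[Mismatch]:
    """Return (index, dut_commit, ref_commit) at the first mismatch, or None.

    A trace that ends early is reported as a mismatch with None on that side.
    """
    dut_it, ref_it = iter(dut), iter(ref)
    index = 0
    while True:
        d = next(dut_it, None)
        r = next(ref_it, None)
        if d is None and r is None:
            return None  # both traces ended together with no mismatch
        if d != r:
            return index, d, r
        index += 1


# Made-up traces: the design under test writes the wrong value at the third commit.
ref_trace = [Commit(0x0, 1, 5), Commit(0x4, 2, 7), Commit(0x8, 3, 35)]
dut_trace = [Commit(0x0, 1, 5), Commit(0x4, 2, 7), Commit(0x8, 3, 12)]
print(first_divergence(dut_trace, ref_trace))
```

Reporting only the first divergence is a deliberate choice in this sketch: once architectural state differs, every later mismatch is usually a consequence of the first one, so that is the record worth feeding back into the next edit.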

That doesn’t mean it’s a black box. Top engineers on the Hacker News thread flagged the same question every chip designer would: what counts as “completion,” and how much human correction sat behind the autonomous run? Verkor says the spec-to-GDSII flow was uninterrupted, but the team also acknowledges, in the paper itself, that pushing VerCore to a real fab still needs five to ten human experts on DRC, LVS, and timing closure for the foundry’s PDK.

If you’ve never taped out a chip, here’s the gap: synthesizing on ASAP7 is to taping out at TSMC roughly what `cargo build` is to shipping a kernel module that survives 100 million users. Both call themselves “complete.” Only one is.

Why this lands now

Three things converged. The first is Anthropic and OpenAI both shipping models with much longer effective context windows in March and April. Claude Opus 4.7 and GPT-5.5 can now hold a multi-thousand-line Verilog codebase and the full simulator log in a single thinking session. A 219-word prompt fits trivially; the iteration trace doesn’t fall out the bottom of the context.

The second is the maturation of the open EDA stack. ASAP7, Yosys, OpenROAD, and the open RISC-V testbenches mean an agent can now drive a full flow without a $1M Synopsys license. Every step of Design Conductor’s pipeline is open-source tooling.

The third is the cost curve on inference. Tom’s Hardware reports the run consumed “many tens of billions of tokens” for a “comparably simple design.” That was prohibitive 18 months ago at GPT-4 prices. At Sonnet 4.6’s roughly $3 per million output tokens, a 50-billion-token run comes to about $150,000, so call it $100,000 to $200,000 depending on the exact count. That’s still real money, but it’s a few months of one engineer’s loaded cost, not a quarter of headcount.
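
The arithmetic behind that estimate is short enough to write out. The token counts and the per-million price below are this article's round numbers, treated as assumptions; a real bill depends on the input/output mix, caching, and which model actually ran, none of which Verkor has published.

```python
def run_cost_usd(tokens: float, usd_per_million_tokens: float) -> float:
    """Flat-rate cost of a run: tokens billed at a single per-million price."""
    return tokens / 1_000_000 * usd_per_million_tokens


PRICE = 3.0  # USD per million tokens, the article's rough figure (assumption)

# "Many tens of billions" is not a precise count, so try an illustrative range.
for tokens in (30e9, 50e9, 70e9):
    print(f"{tokens / 1e9:.0f}B tokens -> ${run_cost_usd(tokens, PRICE):,.0f}")
```

At 50 billion tokens this gives $150,000, and the 30 to 70 billion range spans $90,000 to $210,000, which is where the six-figure estimate comes from.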

Verkor plans to release VerCore’s RTL and build scripts by end of April and demo an FPGA implementation at DAC, the EDA industry’s biggest conference. That’s the next reality check: an FPGA implementation is much stronger proof than a simulation and far cheaper than silicon, and DAC’s audience knows where the bodies are buried.

What it doesn’t change (yet)

Don’t read this as “AI replaces chip designers.” Read it as “AI takes a serious bite out of the integer-core spec-to-RTL stage of the EDA pipeline.” That stage is roughly 10 to 15% of the total cost of bringing a custom chip to market. Verification eats more, physical design eats more, and tapeout DRC/LVS/post-silicon validation eats more again.

VerCore is also a deliberately small target. A modern Apple A-series core has wide out-of-order issue, a reorder buffer with hundreds of entries, branch predictors with multi-level history, three levels of caches, vector and matrix extensions, virtualization, and a power-management state machine. The microarchitectural surface area is at least two orders of magnitude larger than a five-stage in-order RV32I. That gap won’t close with more tokens; it’ll need better tools, better verification, and probably a different agent architecture for hierarchical design.

The other open question is IP. Modern CPUs ship with hundreds of pages of patent licenses around branch prediction, cache coherency, prefetch, and side-channel mitigations. An autonomous agent that synthesizes plausible RTL has no concept of “patented in 2019 by Arm.” The first lawsuit over an AI-designed core that infringes a patent despite being a clean-room implementation will set the next chapter, not the next paper.

What this means for you

If you build software, this is interesting but not actionable yet. Custom silicon for your workload is still a 24-month, eight-figure project, and Design Conductor doesn’t change that. What it does change is the floor. A small team at an academic lab or a startup can now produce a real, simulated, modern-process RISC-V core in a week instead of a year. That’s how Berkeley’s BOOM and Rocket cores started; the pace of follow-on academic CPU work is about to get a lot faster.

If you work in EDA, the people to watch are the verification vendors. Cadence and Synopsys have leaned into AI-assisted verification because that’s where the cost actually is. An agent that can write RTL is great. An agent that can prove RTL doesn’t have a 1-in-10⁹ corner case is what saves you from a recall. The first lab to ship that wins the next decade.
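
To see why proof matters more than simply running more simulation, here is a back-of-the-envelope sketch. It assumes, purely for illustration, that a bug fires independently with probability 10⁻⁹ on each random test; real corner cases are not uniformly random, so treat the output as intuition rather than a verification metric.

```python
import math


def p_hit(p_bug: float, n_tests: int) -> float:
    """Chance that at least one of n independent random tests triggers the bug.

    Computes 1 - (1 - p)^n using log1p/expm1 so tiny probabilities stay accurate.
    """
    return -math.expm1(n_tests * math.log1p(-p_bug))


for n in (10**6, 10**9, 10**10):
    print(f"{n:.0e} random tests -> {p_hit(1e-9, n):.3f} chance of hitting the bug")
```

Under that assumption, a million random tests find the bug about 0.1% of the time, and even a billion tests miss it more than a third of the time.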

My read: VerCore at 1.48 GHz on ASAP7 is a “Hello, world.” The interesting demo will be at DAC, on real silicon or real FPGA, when somebody tries to boot Linux on a Verkor-designed core. Until then, treat the headline as a milestone, not a turning point. We’ve seen this curve before with agentic coding; it bends slowly, then quickly.

TL;DR

- Verkor’s Design Conductor went from 219-word spec to a tape-out-ready RISC-V core in 12 hours.

- VerCore hits 1.48 GHz on a 7nm simulation, CoreMark 3,261. Roughly a Celeron from around 2011.

- It hasn’t been fabricated. Five to ten human experts are still needed to push it to real foundry silicon.

Sources

- AI Agent Designs a RISC-V CPU Core From Scratch — IEEE Spectrum

- AI agent designs a complete RISC-V CPU from a 219-word spec sheet in just 12 hours — Tom's Hardware

- Design Conductor: An agent autonomously builds a 1.5 GHz Linux-capable RISC-V CPU — arXiv

- An AI agent just designed a complete RISC-V CPU from scratch in 12 hours — TechSpot

Frequently Asked

- What does 'tape-out-ready' actually mean?

- It means the flow produced a complete GDSII layout file, the format a foundry accepts, but the design has not been fabricated. The chip exists only in simulation on the open ASAP7 7nm research PDK, not on real silicon.

- Why is VerCore compared to an Intel Celeron from around 2011?

- CoreMark 3,261 at 1.48 GHz roughly matches an Intel Celeron SU2300 from 2011. Modern Apple, AMD, or Intel cores score 30 to 80x higher per core because they are wider, deeper, and ship far more advanced branch prediction, caches, and vector units.

- Did the agent really do this with no human help?

- Verkor says the spec-to-GDSII flow ran without human intervention. They also say five to ten human experts will still be needed to push the design through real-foundry DRC, LVS, and timing closure for production silicon.

- Can VerCore boot Linux?

- Not yet. The published demo implements rv32i-zmmul, a 32-bit RISC-V base ISA without the privileged or memory-management features Linux needs. The paper's title flags 'Linux-capable' as the next milestone.

- How much did the run cost?

- Verkor has not published a number, but Tom's Hardware reports the agent consumed 'many tens of billions of tokens' even for a comparably simple design. At current frontier-model output prices, that's a six-figure compute bill.