Why your next RAM kit costs double: the AI memory crunch, explained

DDR5 contract prices have jumped 100% since 2025, HBM is eating 23% of DRAM wafers, and the fabs that could fix it don't come online until 2028. Here's the shape of the crunch.

A 32GB DDR5 kit that sold for $150 in late 2024 is pushing past $400 today, and Samsung’s Wonjin Lee told Bloomberg the shortage is going to “affect everyone” through 2026. This isn’t a shipping snarl or a factory fire. It’s the AI buildout eating the memory industry’s wafer capacity faster than anyone can build new fabs.

Why the crunch is different this time

Memory has always been cyclical. The 2018 DRAM crunch, the 2021 NAND squeeze, and the 2023 glut all ran the same playbook: demand spikes, prices overshoot, fabs expand, supply catches up, prices collapse. The industry calls it the memory cycle, and you could set your watch by it.

The 2026 crunch breaks the pattern because the demand spike is structural, not cyclical. Nvidia, AMD, Broadcom, and Google all ship AI accelerators with HBM (high-bandwidth memory) stacked directly on the package. TrendForce reports that HBM now occupies 23 percent of the industry’s DRAM wafer output, up from 19 percent a year ago. The kicker: HBM is wildly less wafer-efficient than DDR5 because of the TSV (through-silicon via) processing, and each bit of HBM burns about three times the wafer area a DDR5 bit would. So a 23 percent HBM share feels more like 50 percent of the effective supply when you account for what those wafers could have produced.
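The jump from 23 percent to roughly half is easier to follow as arithmetic. Here is a minimal sketch, assuming the 3x area-per-bit figure quoted above. The function names are illustrative, and treating the 23 percent as a share of bits shipped is an assumption made here, since that is the reading under which the result lands near 50 percent.

```python
# Illustrative arithmetic only. The 3x penalty and the 23% share are the
# article's figures; how the 23% is measured is an assumption of this sketch.
AREA_PER_BIT_RATIO = 3.0  # wafer area per HBM bit, relative to one DDR5 bit
HBM_SHARE = 0.23


def wafer_share_from_bit_share(bit_share, area_ratio=AREA_PER_BIT_RATIO):
    """Wafer-area share HBM needs if `bit_share` of all DRAM bits are HBM."""
    hbm_area = bit_share * area_ratio
    conventional_area = 1.0 - bit_share
    return hbm_area / (hbm_area + conventional_area)


def bit_share_from_wafer_share(wafer_share, area_ratio=AREA_PER_BIT_RATIO):
    """Share of all DRAM bits that are HBM if `wafer_share` of wafers go to HBM."""
    hbm_bits = wafer_share / area_ratio
    conventional_bits = 1.0 - wafer_share
    return hbm_bits / (hbm_bits + conventional_bits)


if __name__ == "__main__":
    # 23% of bits as HBM ties up about 47% of wafer area.
    print(f"{wafer_share_from_bit_share(HBM_SHARE):.1%}")
    # 23% of wafers as HBM yields only about 9% of the bits.
    print(f"{bit_share_from_wafer_share(HBM_SHARE):.1%}")
```

Either way you read the 23 percent, the penalty is the same: a wafer spent on HBM returns about a third of the bits it would have produced as DDR5.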

That’s before you count GDDR7, nominally gaming-GPU memory, and LPDDR5X, nominally phone memory, both of which are also being pulled toward server products this cycle. TrendForce’s all-in math: AI is effectively claiming almost 20 percent of global DRAM supply in 2026.

The Q1 2026 price shock in numbers

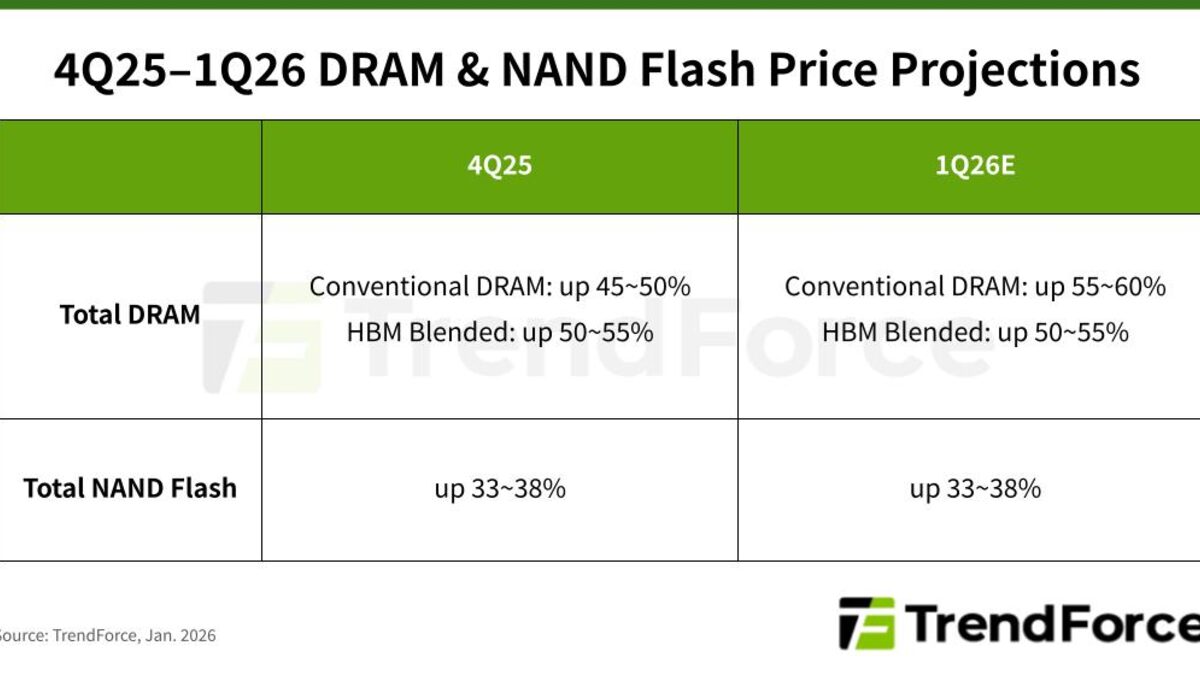

TrendForce’s January 5 advisory is blunt:

- Conventional DRAM contract prices: +55 to 60 percent QoQ.

- Server DRAM: +60 percent QoQ, with U.S. hyperscalers pulling in orders to lock supply.

- NAND flash: +33 to 38 percent QoQ across categories.

- Client SSDs: +40 percent QoQ or higher, because bit-supply got diverted to data-center SSDs.

- Mobile LPDDR4X/5X: tight despite lower seasonal demand, because makers are allocating away from phone OEMs.

Those are quarterly jumps on top of a 2025 that already saw DRAM prices roughly double. The 32GB Corsair Vengeance DDR5-6000 kit that PC Gamer tracks went from $89.99 in November 2024 to $427 in early 2026. Retail 32GB DDR5 kits are on a trajectory to clear $500 before they stop climbing, and 64GB RDIMM server modules are expected to hit double their early-2025 prices by the end of Q2 2026.
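Stacking a quarterly jump on top of an annual doubling compounds rather than adds. A rough sketch of that arithmetic, using only the figures quoted above; the `compound` helper is a hypothetical name, and treating the 2025 doubling as exactly 2.0x is a simplification.

```python
def compound(start_multiple, *pct_increases):
    """Apply successive percentage increases to a starting price multiple."""
    multiple = start_multiple
    for pct in pct_increases:
        multiple *= 1 + pct / 100
    return multiple


# Contract DRAM: roughly 2x over 2025, then +55% to +60% in Q1 2026.
contract_low = compound(2.0, 55)
contract_high = compound(2.0, 60)

# Retail kit quoted above: $89.99 (November 2024) to $427 (early 2026).
retail_multiple = 427 / 89.99

if __name__ == "__main__":
    print(f"contract: {contract_low:.2f}x to {contract_high:.2f}x of early-2025 prices")
    print(f"retail kit: {retail_multiple:.2f}x")
```

On these inputs, contract prices land a little over three times their early-2025 level, while the tracked retail kit is already closer to five times its late-2024 price.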

Gartner’s summary puts the knock-on effect at a 17 percent increase in the average PC’s shelf price and a projected 10.4 percent drop in unit shipments for 2026, because a chunk of buyers just won’t replace hardware this year at these prices.

Why the fabs can’t just make more

Two quarters in, every memory CEO is still saying the same thing: we’re sold out, and we’re not racing to add capacity. SK Hynix’s October earnings call confirmed HBM, DRAM, and NAND are “essentially sold out” for 2026. Micron told investors that AI memory demand is “unprecedented” and that it’s exiting the Crucial-branded consumer market entirely to serve enterprise and AI customers.

New fab capacity is slow. Samsung’s next leading-edge DRAM line won’t hit mass production until 2028. Micron’s New York and Japan facilities are on schedule for second-half-of-the-decade production. SK Hynix’s M16 ramp might land meaningful bits in late 2026 at the earliest.

And here’s the part the cycle-watchers keep flagging: Samsung and SK Hynix are openly telling analysts they want to “minimize the risk of oversupply.” Translation: they remember 2023, when prices cratered and balance sheets bled. They’re not going to over-expand this time. That’s rational behavior for them and terrible news for anyone hoping a cycle correction is six months away. Counterpoint Research’s current call is Q4 2027 as the earliest inflection point.

The server-first allocation is a tier system now

U.S.-based cloud service providers sign three-year committed purchase agreements and pay a premium for guaranteed allocation. That’s the tier that keeps getting served. Mid-market enterprise buyers on 12-month contracts get the second-tier allocation with longer delivery windows and spot-market exposure. Consumer OEMs and DIY retail are the last to get bits, and they’re paying the headline prices the gaming press is writing about.

This is what people mean when they say the memory market is bifurcating. A hyperscaler buying 64GB RDIMMs by the container pays less per gigabyte than you pay at Micro Center, and the container ships on time. PC Gamer’s report that Samsung and SK Hynix have told customers to expect another round of DRAM price increases in spring 2026 is really a story about the mid-tier getting the next squeeze.

The tier system also shapes who even gets served. Mid-market buyers who walk in cold are routinely told lead times are eight to twelve weeks on modules that were one-week delivery in 2024. Some OEMs report being locked out of 64GB LRDIMM stock entirely until their existing long-term commit renews. And consumer module makers like Corsair, G.Skill, and Kingston are passing the spot-market cost straight through, because they’re buying the same bits everyone else is bidding for.

The downstream effects nobody’s pricing in yet

The memory crunch isn’t just a hardware-procurement problem. SaaS vendors that run inference-heavy workloads (Cursor, Replit, Claude-backed tools, the whole AI coding tier) pay HBM prices through their cloud bills, and those bills are already showing it in per-seat economics. Cloud providers don’t usually discuss SKU-level cost allocation, but the rising AI-gross-margin pressure on AWS and Azure earnings calls is the same story told from the finance side. Expect the big AI-coding products to announce pricing changes during 2026 as their own memory pass-throughs catch up.

What this means for you

If you’re about to build a PC, bite the bullet on memory now rather than later. On current trajectories, prices climb another 30 to 50 percent each quarter, so the Q3 2026 kit is going to be worse than the Q2 kit, not better. If you’re running servers and your hardware refresh is scheduled for 2026 or early 2027, go back to procurement and model the 60 percent server-DRAM hike against your budget today. The Meta and Broadcom custom-chip deal is the hyperscaler version of the same pressure. SaaS vendors are already telling customers to expect price pass-throughs, and that’s the signal to lock in multi-year commitments on whatever memory-heavy services you actually depend on, while they’re still willing to quote fixed pricing.
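For the server-refresh case, the modeling exercise can start as a few lines of arithmetic. This is a rough sketch, not a procurement tool. The fleet size, per-server cost, DRAM share of the bill of materials, and budget are all hypothetical placeholders to replace with your own quotes; only the 60 percent hike comes from the figures above.

```python
def unit_cost_after_hike(cost_per_server, dram_share, dram_hike_pct):
    """Per-server cost once the DRAM portion of the bill rises by dram_hike_pct."""
    dram_cost = cost_per_server * dram_share
    other_cost = cost_per_server - dram_cost
    return other_cost + dram_cost * (1 + dram_hike_pct / 100)


def servers_affordable(budget, cost_per_server, dram_share, dram_hike_pct):
    """Whole servers a fixed budget still covers after the hike."""
    unit = unit_cost_after_hike(cost_per_server, dram_share, dram_hike_pct)
    return int(budget // unit)


if __name__ == "__main__":
    # Hypothetical inputs: 100 servers planned at $20,000 each, DRAM assumed
    # to be 30% of the per-server cost, and a $2,000,000 budget.
    planned, old_unit, share, hike = 100, 20_000, 0.30, 60
    new_unit = unit_cost_after_hike(old_unit, share, hike)
    print(f"new unit cost: ${new_unit:,.0f}")
    print(f"refresh total: ${planned * new_unit:,.0f}")
    print(f"servers the budget covers: {servers_affordable(2_000_000, old_unit, share, hike)}")
```

With those placeholder inputs, the same budget covers 84 servers instead of 100. The more of each server's cost is memory, the larger the gap gets.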

If you’re looking at used DDR4 servers as a dodge, the high-density modules aren’t the savings play they used to be, since DDR4 retail is up 30 to 40 percent after a 60 percent 2025 jump. And if you’re a consumer who just wants a 32GB kit for gaming, the blunt advice is: don’t wait for a sale. The sale isn’t coming until late 2027.

Why you’re hearing about this now

Three things converged into this week’s news cycle. The Verge’s long-read on the shortage put mainstream framing on what the industry press has been writing for three quarters. Samsung’s earnings guidance walked the crisis into the finance headlines. And TrendForce’s January advisory, along with SK Hynix’s 8x DRAM production forecast (which still isn’t enough to close the gap), gave the story numbers everyone could cite.

The AI industry is used to talking about chip shortages in terms of accelerators. This one is about memory, and it’s less visible because RAM doesn’t ship in giant GPU boxes with Nvidia logos on them. But the constraint is hitting enterprise customers first, consumers second, and the capex plans of every AI lab that assumed HBM pricing would normalize. Expect the next earnings season to turn “our AI costs are higher than projected” into a recurring theme, and watch whether Counterpoint’s Q4 2027 inflection holds or slips another quarter.

Sources

- Memory Makers Prioritize Server Applications, Driving Across-the-Board Price Increases in 1Q26 — TrendForce

- Samsung warns of memory shortages driving industry-wide price surge in 2026 — Network World

- AI memory is sold out, causing an unprecedented surge in prices — CNBC

- AI Reportedly to Consume 20% of Global DRAM Wafer Capacity in 2026, HBM and GDDR7 Lead Demand — TrendForce

- Memory crisis and sky-high DRAM prices could run past 2028 — PC Gamer

Frequently Asked

- Why did RAM prices double in a year?

- Memory makers reallocated wafer capacity from consumer DDR5 and NAND to server DRAM and HBM for AI accelerators. HBM eats roughly three times the wafer area per bit that DDR5 does, so every gigabyte of HBM displaces three gigabytes of conventional DRAM from the line. TrendForce expects DRAM contract prices up 55 to 60 percent quarter-over-quarter in Q1 2026 alone.

- When will memory prices come down?

- Not soon. Samsung's new fab doesn't hit mass production until 2028. SK Hynix says HBM, DRAM, and NAND are "essentially sold out" for 2026. Counterpoint Research puts the earliest price-inflection point at Q4 2027, and both PC Gamer and Samsung's leadership flag a crunch that could run past 2028.

- Is DDR4 safer than DDR5 right now?

- No. High-density DDR4 modules are up 30 to 40 percent year-over-year after a 60 percent jump in 2025, because memory makers are winding down DDR4 production and buyers are scrambling for the remaining stock. If you're building a PC, the math no longer favors staying on DDR4 for savings.

- Does this affect iPhones and Android phones?

- Yes, with a lag. LPDDR4X and LPDDR5X mobile DRAM supply is tight and suppliers are allocating away from phone OEMs toward cloud customers who sign long-term commitments. Gartner expects the broader memory surge to add roughly 17 percent to PC prices and push shipped units down 10.4 percent, and phone OEMs absorb similar structural costs.